Human face reconstruction

Project 1 (ESR1): Distributed optimization of biokinetic models based on large 4D sequences (IST Lisbon & 3Lateral)

Companies that produce video games or virtual reality devices use biokinetic models of the human form in computer graphics. In particular, 3Lateral places a strong focus on the realistic reconstruction of human facial expressions. This is done using a database of biokinetic models built through 3D and 4D (spatial or space-time) scanning of real human faces and accurate registration of these data for the purpose of statistical modeling and machine learning. A biokinetic model, or “Rig Logic” as it is called in the industry, is composed of a series of vectors representing muscle contractions, whose appearance is obtained through scanning, and layers of corrective vectors. To be compatible with real-time rendering engines, the model can be seen as a hand-crafted nonlinear principal component analysis (PCA) that maps parameters to the mesh deformations of the human face. Given the function curve for each parameter, an animation of the model can be created to render the appearance of the human face. Rig Logic parameters are evaluated from 4D data through a series of linear and nonlinear optimizations. 4D data sets can be extremely large (millions of frames), and Rig Logic often has to be calculated in real time (at most 200 ms delay from event to render). For this reason, the student’s main goal will be to devise and efficiently implement innovative techniques of distributed optimization on a GPU cluster, in order to enhance human face and body representation. The optimization techniques will also be developed for the new geometric models introduced in Project 2 to enhance face representation.
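As a rough illustration of the parameters-to-mesh mapping described above, the following minimal sketch evaluates a toy rig as a neutral mesh plus weighted muscle-contraction vectors plus one layer of corrective vectors. The function name `evaluate_rig`, the data layout, and in particular the choice to drive each corrective by the product of two parameters are assumptions made for illustration; they are not taken from 3Lateral's Rig Logic.

```python
from typing import Dict, List, Tuple

Vector = List[float]  # flattened vertex coordinates (x0, y0, z0, x1, ...)


def evaluate_rig(
    neutral: Vector,
    shapes: List[Vector],
    correctives: Dict[Tuple[int, int], Vector],
    params: List[float],
) -> Vector:
    """Map rig parameters to a deformed mesh (hypothetical toy model)."""
    if len(params) != len(shapes):
        raise ValueError("one parameter per muscle-contraction vector expected")
    mesh = list(neutral)
    # Linear layer: each parameter scales its muscle-contraction offset.
    for weight, offset in zip(params, shapes):
        for k, value in enumerate(offset):
            mesh[k] += weight * value
    # Corrective layer (assumption): driven by the product of two parameters,
    # so it only contributes when both contractions are active together.
    for (i, j), offset in correctives.items():
        activation = params[i] * params[j]
        for k, value in enumerate(offset):
            mesh[k] += activation * value
    return mesh


# Toy data: a single vertex (3 coordinates), two contractions, one corrective.
neutral = [0.0, 0.0, 0.0]
shapes = [[1.0, 0.0, 0.0], [0.0, 2.0, 0.0]]
correctives = {(0, 1): [0.0, 0.0, 4.0]}
mesh = evaluate_rig(neutral, shapes, correctives, [0.5, 0.5])
```

In this sketch the product term makes the mapping nonlinear in the parameters, which is one way to see why recovering parameters from scanned meshes can call for nonlinear as well as linear optimization.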

Project 2 (ESR2): Mathematical morphology for the prediction of face expression transition and emotions (Univ. of Milan & 3Lateral)

A second challenge connected with human face reconstruction concerns a realistic description of human emotions from facial expressions. Human emotions are related to, and recognizable by, specific expressions of the face, which correspond to the simultaneous contraction or release of a set of facial muscles. The contraction of the muscles can be encoded with numbers between 0 and 1, called Action Units, which represent the level of engagement of a specific set of facial muscles. The evolution of Action Units in a video of a human subject can be estimated with dedicated software for image analysis and face recognition.

The goal of ESR2 is to establish and mathematically describe correlations among data sets representing the facial muscle contractions used in the basic interpretation of facial expressions (such as a neutral, happy, sad, disgusted, angry, or afraid face), and to register these data sets on an equidistant timeline. This goal implies general mathematical research focused on developing a new model extraction algorithm, possibly by exploiting functional statistics.
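One simple way to picture registration on an equidistant timeline is to resample an irregularly timed Action Unit series at a fixed step. The sketch below uses plain linear interpolation, which is an assumption chosen for brevity; the project itself may rely on functional-statistics methods instead. The function name `resample_equidistant` and the sample data are hypothetical.

```python
from typing import List


def resample_equidistant(times: List[float], values: List[float], step: float) -> List[float]:
    """Linearly interpolate samples onto t0, t0 + step, t0 + 2 * step, ...

    `times` must be strictly increasing.
    """
    if len(times) != len(values) or len(times) < 2:
        raise ValueError("need at least two matching samples")
    if step <= 0:
        raise ValueError("step must be positive")
    resampled = []
    segment = 0
    n_steps = int((times[-1] - times[0]) / step + 1e-9)
    for n in range(n_steps + 1):
        t = times[0] + n * step
        # Advance to the segment [times[segment], times[segment + 1]] containing t.
        while segment < len(times) - 2 and times[segment + 1] < t:
            segment += 1
        t_left, t_right = times[segment], times[segment + 1]
        alpha = (t - t_left) / (t_right - t_left)
        value = (1 - alpha) * values[segment] + alpha * values[segment + 1]
        # Action Units are defined on [0, 1]; clamp against round-off.
        resampled.append(min(1.0, max(0.0, value)))
    return resampled


# Irregular frame times (seconds) and one Action Unit's estimated intensity.
times = [0.0, 0.1, 0.4, 0.5]
intensity = [0.0, 0.2, 0.8, 1.0]
regular = resample_equidistant(times, intensity, step=0.25)
```

Once every Action Unit series shares the same time grid, the series can be compared sample by sample, for instance to estimate correlations between them.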

Project 3 (ESR3): Stochastic Geometric modelling and 3D image analysis for human face prostheses (Univ. of Milan & uROBOPTICS)

Extracting usable parametric geometry models from point data is an open challenge. Assuming a set of point clouds obtained from instances of an (unknown) parametric class of 3D objects, the challenge is to recover the underlying parametric surface model, knowing that the point clouds are corrupted by noise, have missing data, and are non-uniformly sampled with different densities. Prior work, describing human lower legs, achieved the required objectives with a dataset consisting of a hundred human scans. In this project, surface models with high intrinsic curvature will be considered, requiring both different modelling techniques and the creation of much larger real-world datasets. Registration of these datasets in a common reference frame, prior to model extraction, is a common pre-processing operation that consists of identifying shared features, which can be pre-aligned. The industrial goal for this student will be to recover models of human face features (e.g. ears, noses) for the prosthetic industry, which requires high-quality colour models. In this setting, model instantiation is constrained by the boundary conditions of the existing face shape and texture, which the generated model will need to fit.

Financial Processes

Project 4 (ESR4): Credit scoring and statistical prediction of credit default (IST Lisbon & CIF, SDG)

The variety and volume of financial data are ever-expanding. In the past decade, information coming from traditional sources (e.g. exchanges, databases of financial institutions, or commercial data providers) has become comparable in both size and velocity to that available from social media, mobile interactions, server logs or customer service records. Companies are increasingly turning to data scientists to find meaningful relationships in these vast amounts of data. The large number of decisions involved in the consumer lending business makes it necessary to rely on models and algorithms rather than human discretion, and to base such algorithmic decisions on “hard” information, e.g., characteristics contained in consumer credit files collected by credit bureau agencies. Models are typically used to generate numerical “scores” that summarize the creditworthiness of consumers and may estimate the probability of credit default. This project focuses on improving existing credit scoring models and the related prediction of default by combining the “traditional” databases of companies and individuals (financial reports, behavioural data, bank data, credit bureaus, etc.) with loan servicing data (days in arrears, collateral, loan-to-value, etc.) and with other available sources, in particular those available through open social media activity or transaction data. In the first phase, suitable measures of the relevance of the considered variables will be introduced through modern techniques of machine learning and data mining. In the second phase, new statistical models, more interpretable than the “black box” techniques cited above, will be introduced and then optimized to derive optimal risk-based financial funding procedures. Depending on the introduced measures and risk functions, the optimization problem to be solved can be non-convex.

Project 5 (ESR5): Scoring individual financial investment potential (TU/e & AcomeA)

Modern financial technology (fintech) companies offer, besides traditional systems of investment, online applications and services where individual investors may deposit any amount of money, which can be rapidly withdrawn at any time. Such online systems are often used by small investors such as students and young people as “piggy banks”, or by individuals with larger capital, in parallel with other investments, to diversify their portfolio. The aim of this project is to identify customers who have a financial potential larger than their actual investments, in order to apply targeted marketing strategies. The financial behaviour of individuals is related to their attitude towards risk and saving, to their way of living, and to other factors. In this project, we will retrieve this information from socio-demographic data, geolocated data, and the analysis of social networks, by defining relevant variables and suitable measures and distances that may quantify the features of interest. ESR5 will then develop a feature extraction procedure to reduce the problem dimension and identify the most relevant variables for describing the financial behaviour of individuals. The identification of such variables is crucial and challenging, due to the heterogeneity of the information to be considered. Based on the selected variables, the customers with unexploited financial potential will then be identified via quantile regression techniques.
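Quantile regression fits a chosen quantile rather than the mean by minimizing the pinball (quantile) loss. The minimal sketch below shows that loss and, as a stand-in for a full regression, the constant prediction that minimizes it on a small sample. The names `pinball_loss` and `best_constant_quantile`, the quantile level 0.75, and the data are hypothetical choices for illustration.

```python
from typing import List


def pinball_loss(y_true: List[float], y_pred: List[float], tau: float) -> float:
    """Average pinball (quantile) loss at level tau in (0, 1)."""
    total = 0.0
    for y, q in zip(y_true, y_pred):
        residual = y - q
        # Under-prediction is weighted by tau, over-prediction by (1 - tau).
        total += tau * residual if residual >= 0 else (tau - 1) * residual
    return total / len(y_true)


def best_constant_quantile(y: List[float], tau: float) -> float:
    """Constant prediction minimizing the pinball loss, searched over the samples."""
    return min(y, key=lambda q: pinball_loss(y, [q] * len(y), tau))


# Hypothetical invested amounts for customers with similar profiles.
invested = [1.0, 2.0, 3.0, 4.0, 100.0]
upper = best_constant_quantile(invested, tau=0.75)
```

In a full quantile regression the constant would be replaced by a function of the selected variables; a customer whose actual investment lies well below a high conditional quantile for their profile would then be a candidate for unexploited potential.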

Production Systems

Project 6 (ESR6): Prediction of failure events in complex productive systems (TU/e & SDG)

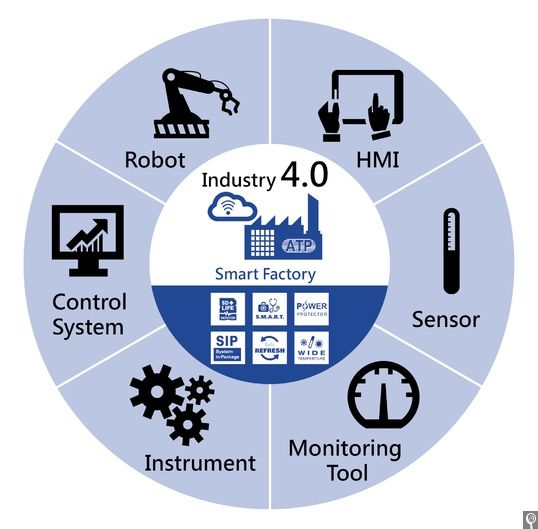

With the advent of Industry 4.0, many current industrial processes are subject to continuous monitoring of their efficiency by sensors, which provide numerical data of various types at high frequency. The data gathered by these systems have various uses. Two important but difficult objectives are (1) prediction of events that can impair (damage) the process output, and (2) prediction and optimization of the quality of the process end-product. However, the availability of vast amounts of data leads business users to even more ambitious objectives: (3) understanding the causes of failures and varying quality, and (4) taking actions to improve the process, to avoid failures or to reduce the failure rates. These goals are very challenging. Good and reliable prediction usually requires both linear and nonlinear models and algorithms, which are often difficult to interpret, so that causal relationships remain unclear. Conversely, interpretable models are often unable to represent complex, nonlinear and dynamic relationships. Therefore, the student will develop efficient models that are able to predict the occurrence of process impairment with good reliability, and to tell which features contribute most to the damage in the given conditions. Three key mathematical ingredients that we will use are: (a) compositionality: building complex models from simpler components; (b) model reduction: simplification of the model, so that it can be simulated and its parameters identified without losing much accuracy; (c) causality: understanding what is causing what and taking improvement-oriented actions. Current approaches to impairment prediction frequently use deep learning models, which are flexible and often provide good results, but are usually very hard to interpret. In this project, the student will introduce innovative, more interpretable models.
Such models will support the development of innovative methods for process optimization and control by the student enrolled in Project 7.

Project 7 (ESR7): Big data optimization for network adjustment (Univ. of Novi Sad & Sioux LIME)

Digitalization of many public services includes machine learning applied to huge paper records consisting of text, numbers, sketches, etc. The results obtained are in general usable but still subject to errors that need to be corrected, either by human intervention or in some automatic way. When the size of the problem is really large, human intervention is too costly and complicated, and hence an algorithmic procedure is needed. The problem to be considered by ESR7 can be described as a network adjustment problem. Namely, given a set of digital sketches obtained by a machine reading process, the task is to determine the exact position of points in a plane. Thus the problem can be considered as a noisy optimization problem of very large dimension (RO3). Several important questions arise in any attempt to solve the problem. First of all, it is a noisy problem, as the measurements are prone to errors, either human errors before digitalization or machine reading errors in the process of digitalization. Then, the size of the problem is really large and can be expressed in millions of variables. Furthermore, the structure of the problem is sparse but without any exact pattern, i.e. the sparsity is of a random nature (RO1). A further difficulty arises from the fact that problems of this kind are very likely non-convex (RO4), and thus finding a stationary point does not mean that one has found a global minimizer.

The aim of the ESR7 project is to develop a method able to cope with these challenges. More specifically, an optimization algorithm for solving the network adjustment problem will be proposed, theoretically analysed and implemented. The method should be designed in a way that allows parallelization or distributed implementation. Furthermore, the theoretical analysis of the method should be comprehensive and include error propagation analysis.
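To make the notion of network adjustment concrete, the following minimal sketch solves a deliberately simplified version: noisy relative displacements between points in the plane are reconciled in the least-squares sense, with one point held fixed. This toy is linear and convex, whereas the problem described above is likely non-convex and far larger. The measurement model, the Jacobi iteration, and the name `adjust_network` are assumptions chosen for illustration, not the method to be developed by ESR7.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]
Measurement = Tuple[int, int, float, float]  # (i, j, dx, dy): p_j - p_i ~ (dx, dy)


def adjust_network(
    n_points: int,
    fixed: Dict[int, Point],
    measurements: List[Measurement],
    iterations: int = 200,
) -> List[Point]:
    """Jacobi iterations for least-squares positions from relative measurements."""
    points = [fixed.get(k, (0.0, 0.0)) for k in range(n_points)]
    for _ in range(iterations):
        sums = [[0.0, 0.0, 0] for _ in range(n_points)]
        for i, j, dx, dy in measurements:
            # Each measurement proposes a position for both of its endpoints.
            sums[j][0] += points[i][0] + dx
            sums[j][1] += points[i][1] + dy
            sums[j][2] += 1
            sums[i][0] += points[j][0] - dx
            sums[i][1] += points[j][1] - dy
            sums[i][2] += 1
        # Every free point moves to the mean of its proposals. The updates only
        # use the previous iterate, so they could be computed in parallel.
        updated = []
        for k in range(n_points):
            sx, sy, count = sums[k]
            if k in fixed or count == 0:
                updated.append(points[k])
            else:
                updated.append((sx / count, sy / count))
        points = updated
    return points


# Three points, point 0 fixed at the origin, slightly inconsistent measurements.
measurements = [(0, 1, 1.0, 0.0), (1, 2, 0.0, 1.0), (0, 2, 1.1, 0.9)]
adjusted = adjust_network(3, {0: (0.0, 0.0)}, measurements)
```

Because each update touches only a point and its measured neighbours, the sparsity of the measurement graph carries over directly to the cost of one sweep, which is the kind of structure a parallel or distributed method can exploit.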
